Team 404

Jill Minrong Hua

Youya Huang

Think about how you use navigation applications today. You type in your destination and get directions, or, when those applications are not working properly, you get lost on your way to a new place.

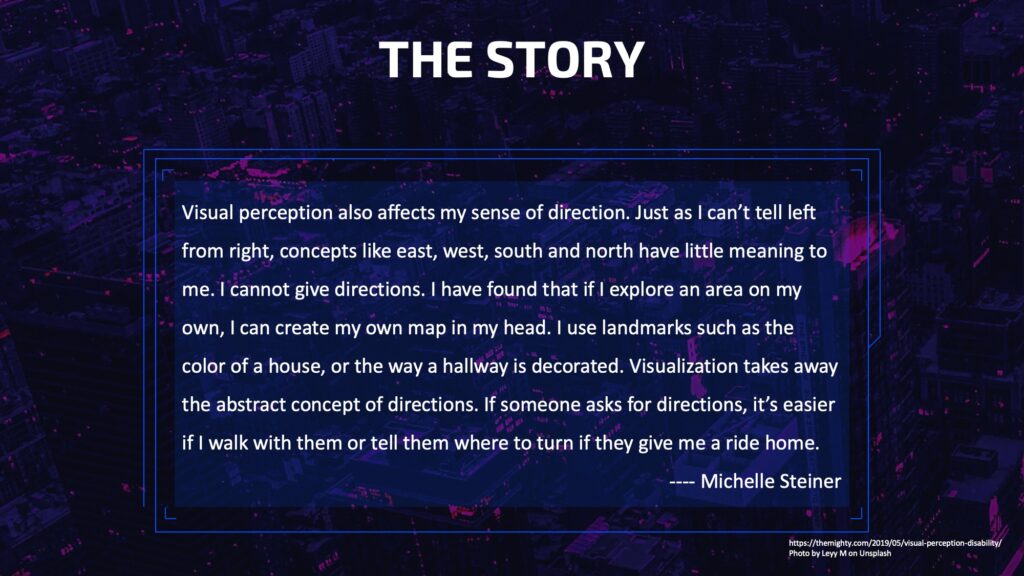

But what about people with learning disabilities? For them, navigation can be a different experience. We chose to focus on navigation because our research showed that people with a visual perceptual deficit, one type of learning disability, can lack spatial skills. In other words, they may get lost easily and have trouble distinguishing directions such as east, west, left, and right.

The problem we want to solve is how to help people navigate better, especially people with learning disabilities.

Our concept is closer to a design fiction: it is not possible with today’s technology, but it is likely to become possible in the future.

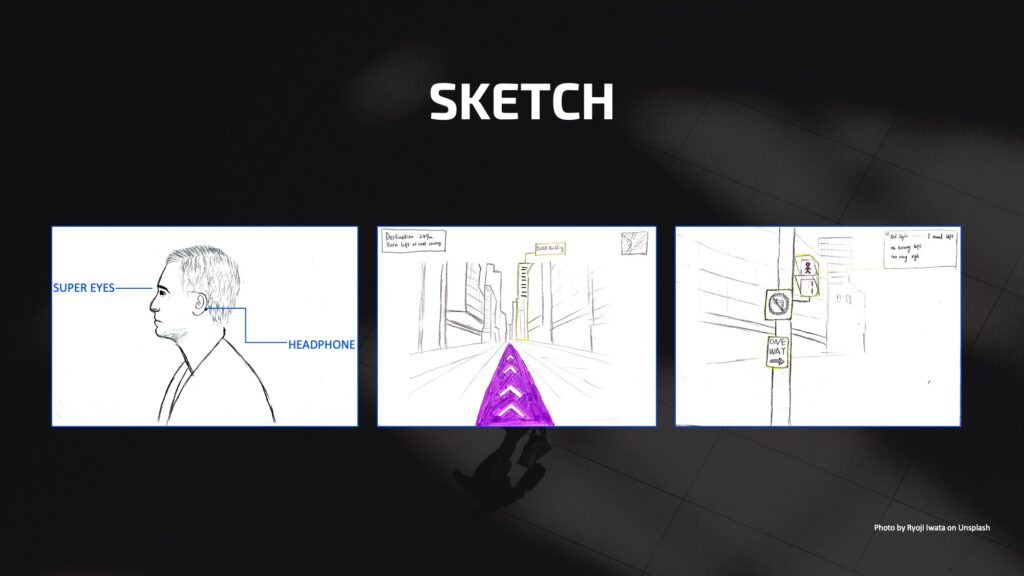

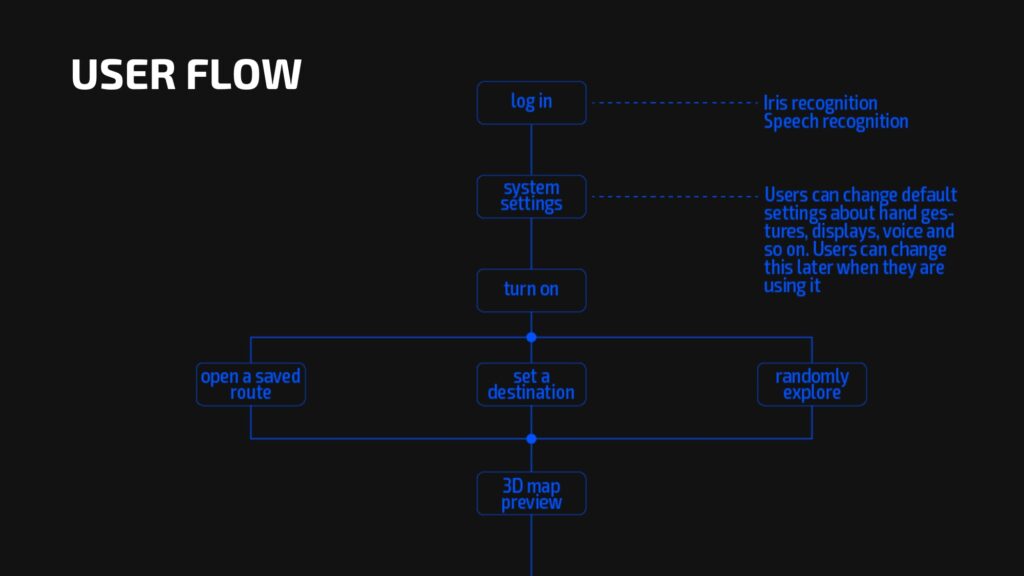

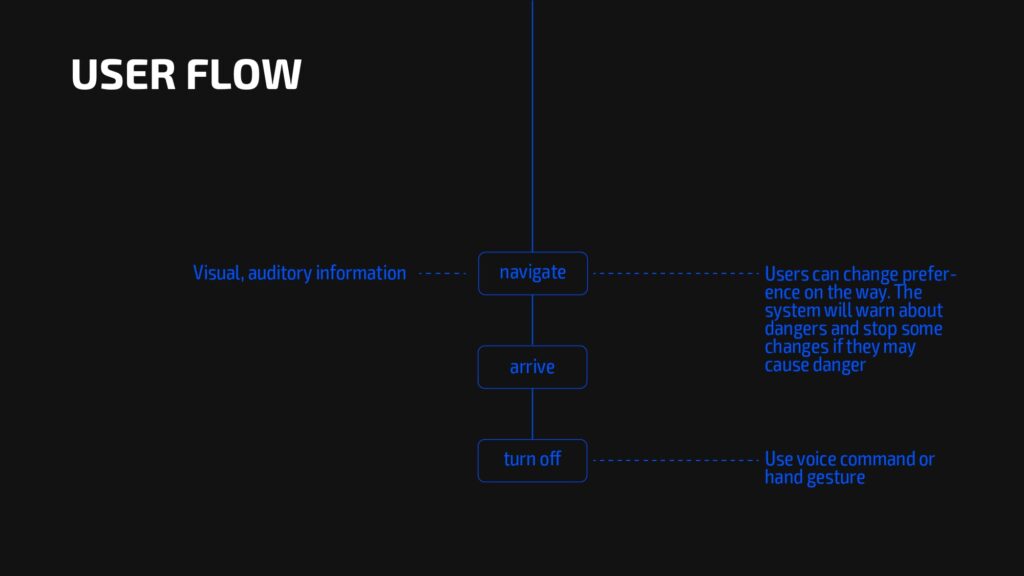

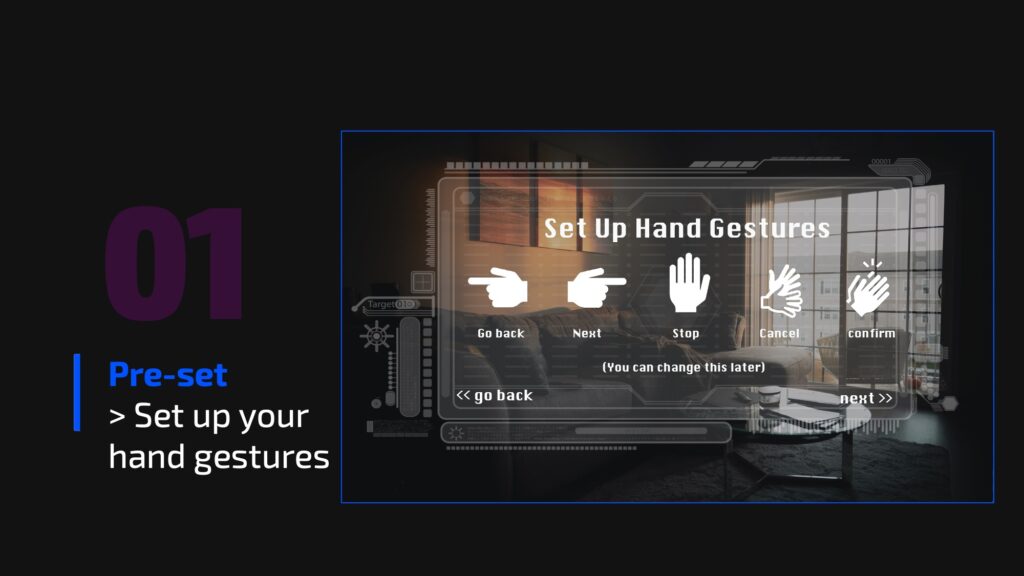

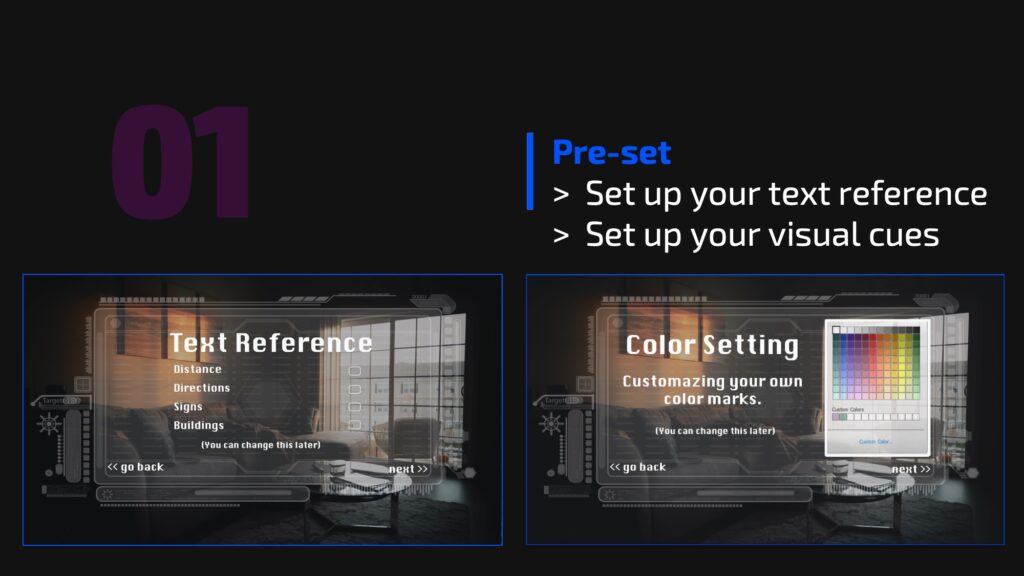

Our futuristic design is a pair of contact lenses combined with bone-conduction headphones. Together they offer an AR map and audio guidance. Users can customize how the map is displayed and how the audio assistance works according to their preferences.

We originally developed our concept, SUPER EYES (AR navigation contact lenses paired with headphones), to offer people with a visual perceptual deficit an alternative way to navigate. Later, however, we could not find enough evidence that these people can use AR technology without problems, so we broadened our target users to all people with learning disabilities.

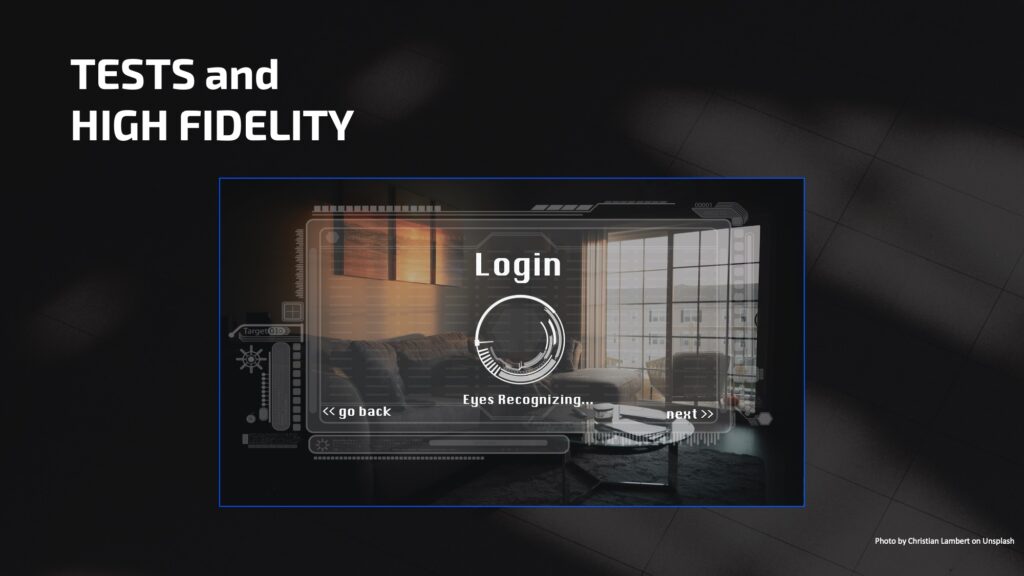

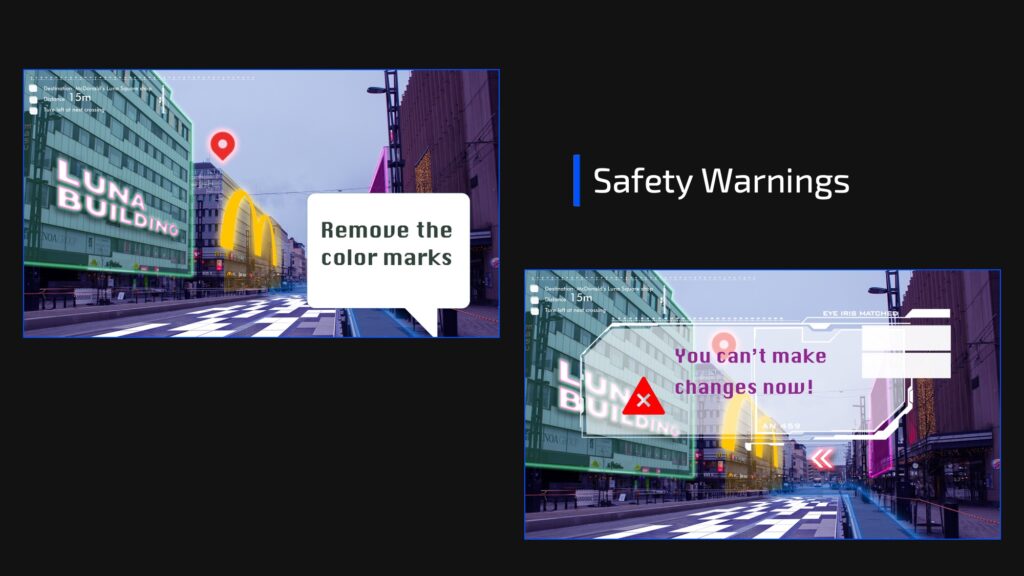

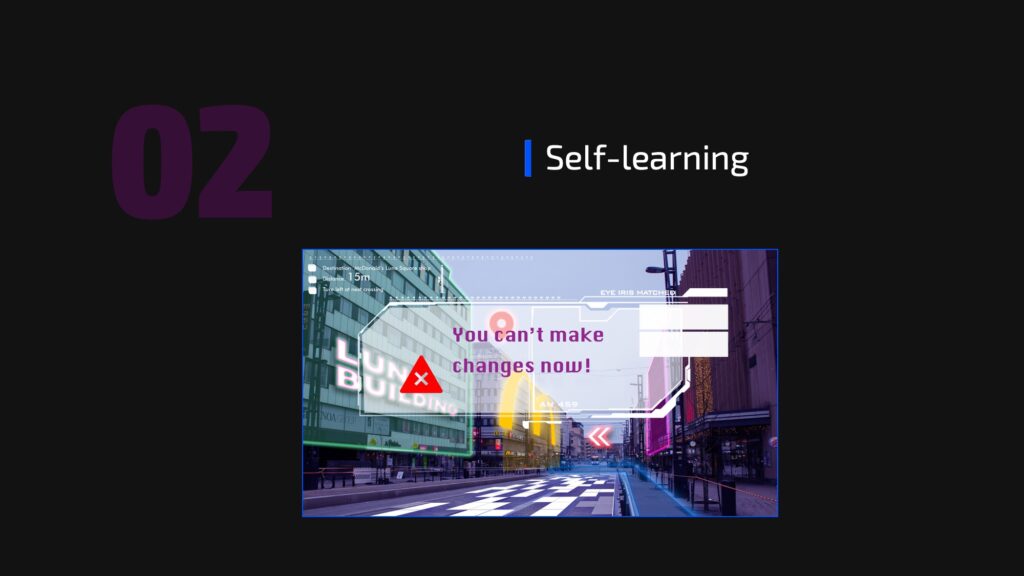

After completing these tests, we made several improvements based on the feedback.

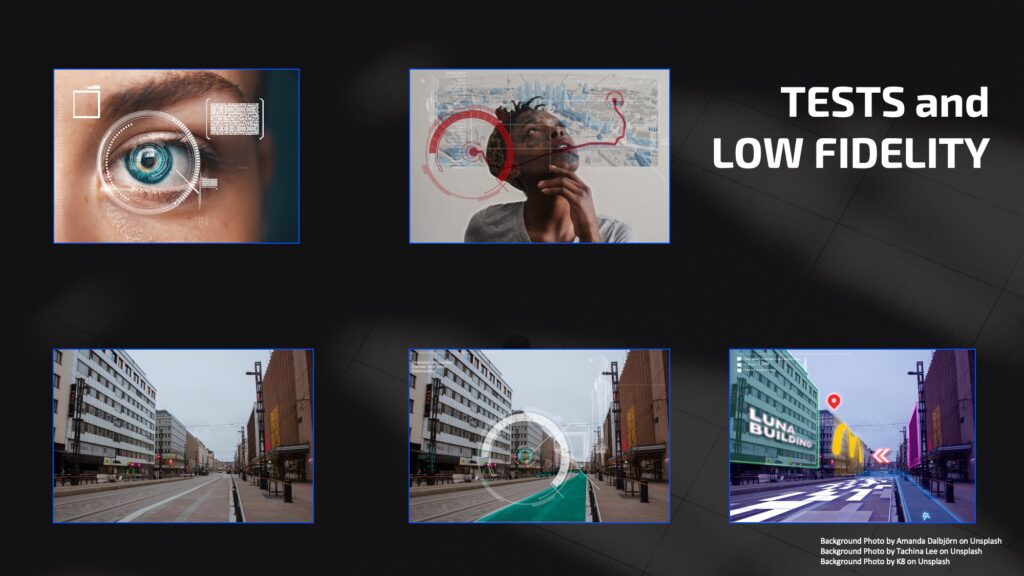

Compared with traditional map applications or devices such as Google Maps, Super Eyes does not require users to look at a screen to see routes and visual references. Looking at a phone screen while walking can cause serious safety issues.

Super Eyes adds these marks and references directly onto the real world in a subtle and delicate way.

Because the device is invisible to other people, it protects users’ privacy.

Its functions and features can be adjusted to each user’s personal needs and values.